AI-Augmented Security Engineer

Most teams can’t afford a principal security engineer. The teams that do have one stretch that engineer across more than 79 developers. Trent is the security expert that’s always there: continuously learning, staying current as your stack evolves, and scaling with your team without adding headcount.

Specialized Agents. One Continuous Loop.

Trent AI’s Agentic Security Solution is a single, unified offering. Rather than a patchwork of disconnected security tools, it delivers one self-reinforcing system of specialized agents. Each agent does one job exceptionally well, and every cycle through the system makes the next one smarter.

Threat Scanning Agent

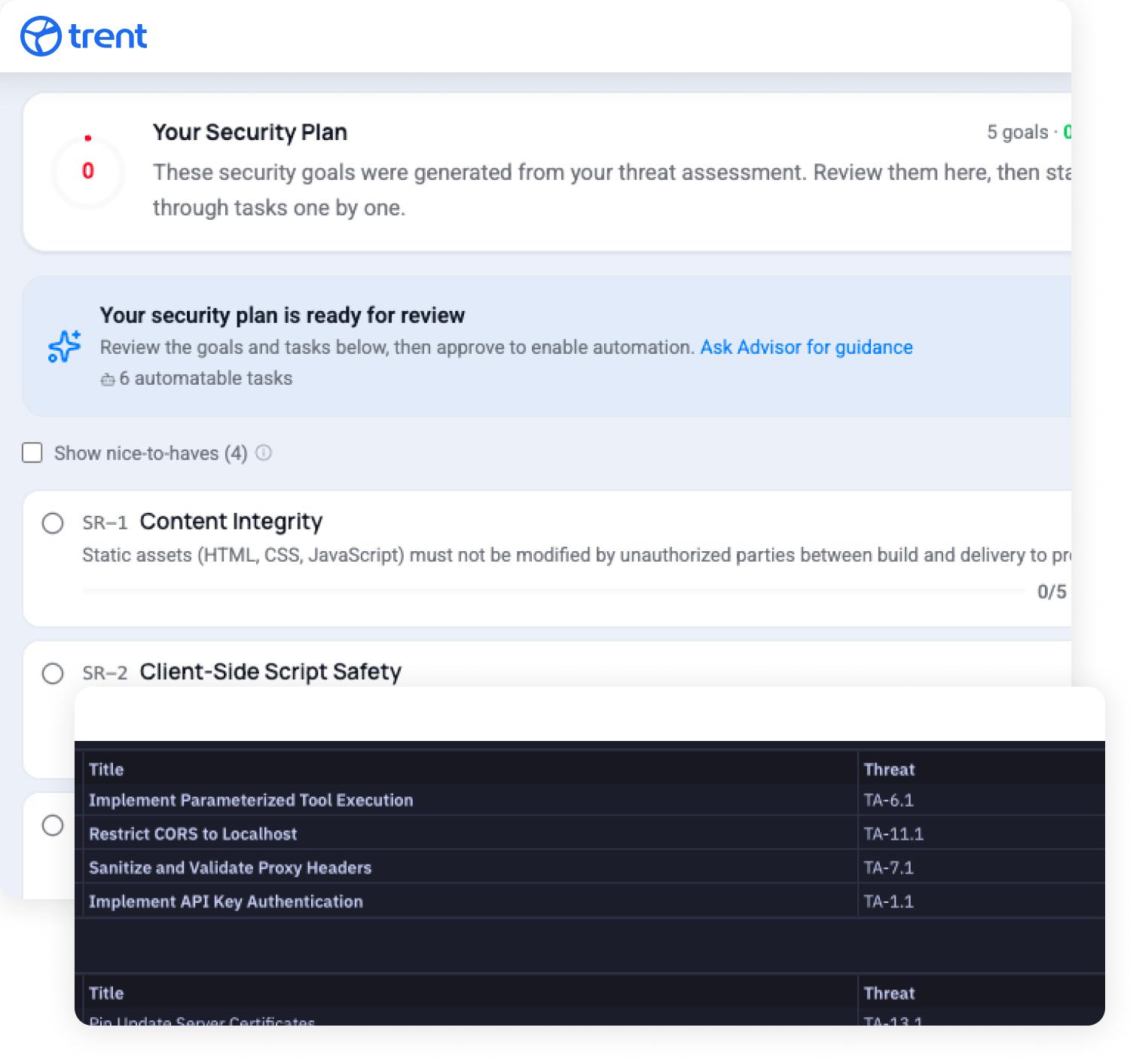

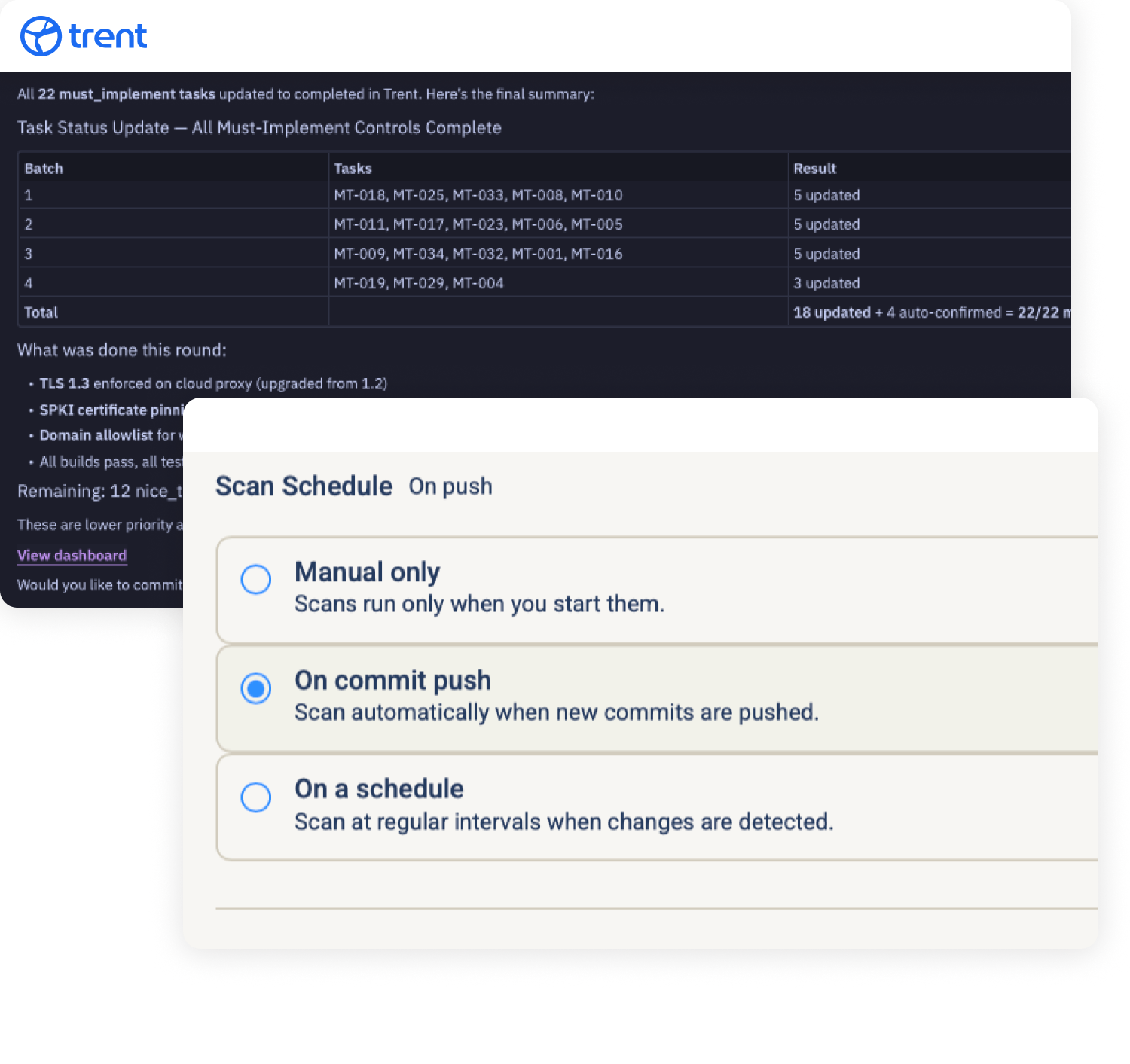

Scanning agents continuously observe your agents, code, infrastructure, and dependencies, learning where to look for risks and what matters in each environment. Whether you maintain legacy code, work in traditional CI/CD workflows, or build agentic systems, Trent’s scanning agents reduce noise over time, focus attention on high-risk surfaces, and flag increasingly high-signal findings.

Analysis Agent

Analysis agents (the Judge step of the loop) separate signal from noise, assess business impact, and prioritize by real risk rather than static rules. As they accumulate context across environments and historical outcomes, their judgments become sharper and more predictive.

Remediation Agent

Remediation agents (the Mitigate step of the loop) patch vulnerabilities, open pull requests, adjust configurations, and validate that fixes actually work. Because they observe which fixes succeed and which fail, they steadily improve their effectiveness within each customer’s stack.

Security Posture Agent

Security posture agents (the Evaluate step of the loop) track trends, quantify risk over time, benchmark against standards, and identify systemic weaknesses. As the system accumulates data, these agents get better at forecasting where risk will emerge next, which informs smarter scanning and tighter prioritization.

Every Cycle Makes the Next One Smarter.

Each pass through the loop feeds intelligence back into the next: Scan learns where to look from Evaluate’s forecasts. Judge gets sharper from Mitigate’s outcomes. Mitigate improves from watching which fixes hold. The result is security that gets more precise, faster, and more effective the longer it runs, compounding alongside your agents instead of falling further behind.

The Same Engine. Wherever You Build.

The agent loop runs the same way everywhere. What changes is how you connect and where fixes land. Start wherever makes sense for your team.

For agentic systems: connect your repositories or agent definitions. Trent learns your architecture and continuously re-scans, re-judges, re-mitigates, and re-evaluates as your agents and agentic code evolve.

For Lovable projects: connect your GitHub repository. Trent scans your project, builds a fix plan, and applies fixes directly through Lovable via MCP.

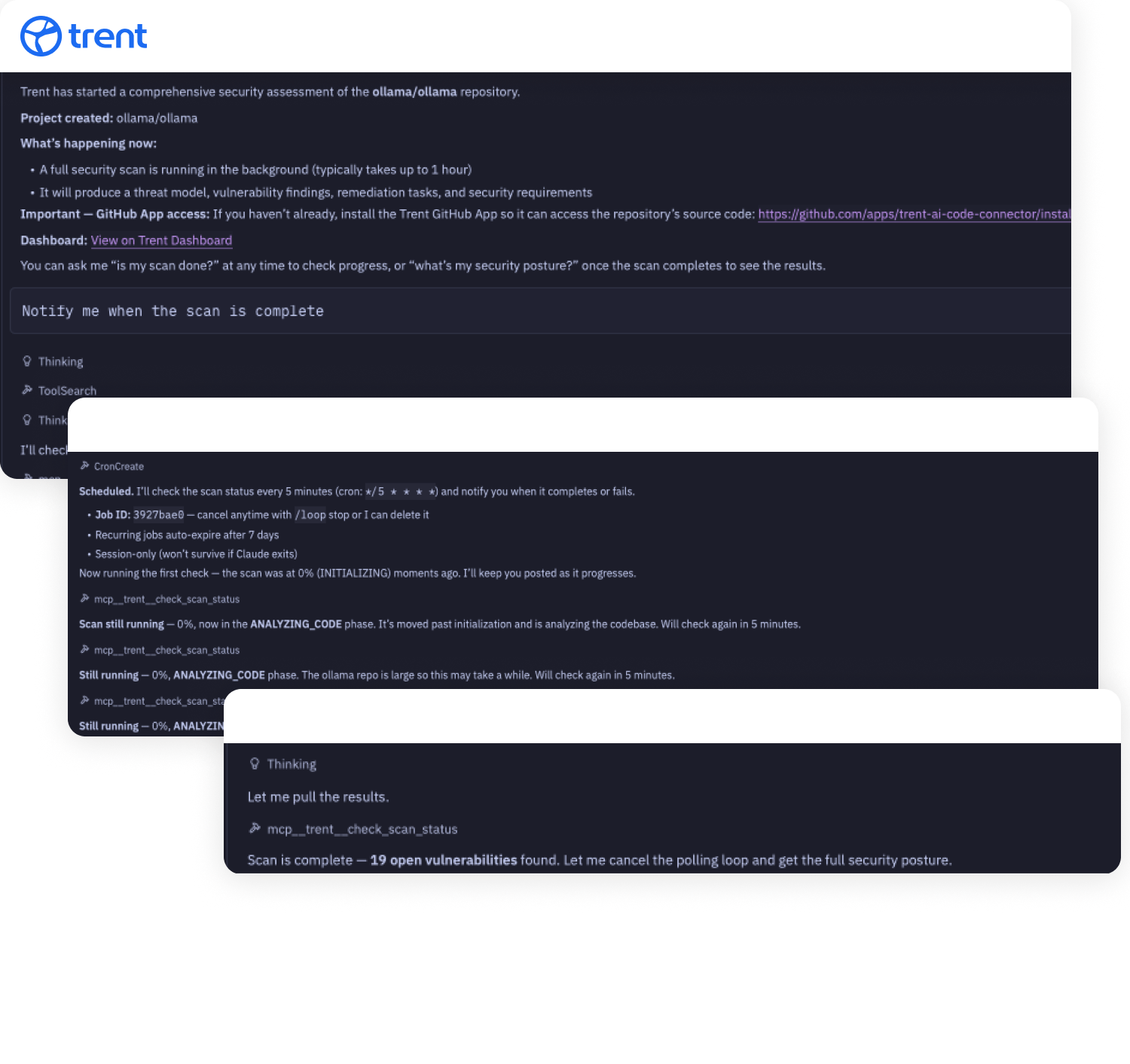

For Claude Code: install Trent’s MCP server into Claude Code. Security assessments run directly in the IDE, and the fix plan feeds into Claude Code’s task system.

For OpenClaw: install Trent as a skill inside OpenClaw and run security assessments directly from your environment, without leaving the tools you already use.

Your AI application reasons, plans, and acts. Trent finds the risks traditional AppSec tools were never designed to see and secures the new threat surfaces your agentic systems create.

Build Agentic. Stay Secure.

Connect your environment. Your security compounds from day one.

FAQs

What is an AI security solution?

An AI security solution protects the AI systems your team builds and deploys, not just the code underneath them. For agentic systems specifically, that means securing agent behavior, tool permissions, data flows, and the emergent risks that arise when autonomous components interact. Trent AI delivers this through specialized agents that work in a continuous loop: scanning, judging risk, fixing issues, and evaluating your overall security posture.

How is this different from traditional application security tools?

Traditional tools such as SAST, DAST, and SCA find known issues: flagged code patterns, insecure dependencies, and known vulnerabilities. They can’t reason about agent behavior, prompt-driven logic, or the risks created when AI agents call APIs, chain tools, and act on behalf of users. Trent AI is purpose-built for agentic systems. It assesses your environment in context, not just your code in isolation.

What is the difference between “AI for Security” and “Security for AI”?

“AI for Security” uses AI to improve traditional cybersecurity: better threat detection, faster SOC triage, automated incident response. “Security for AI” protects the AI systems themselves: the agents, models, and autonomous workflows your team builds. Trent AI is Security for AI. We secure the agents you deploy.

How do the agents work together?

Scan agents continuously observe your environment and identify risks. Judge agents determine which findings represent real threats and prioritize by business impact. Mitigate agents act on prioritized risks: patching, opening PRs, adjusting configurations. Evaluate agents step back and assess the system as a whole, tracking trends and forecasting where risk will emerge next. Each cycle feeds intelligence back into the next, so the system compounds over time.

Do I need to give Trent access to my source code?

You can start with a URL-only assessment that analyzes your application from the outside. For deeper analysis, connect a source code repository, agent definitions, or design documents. You control what level of access to provide. The agent loop runs at whatever level of access you choose.

What types of applications does Trent secure?

Trent secures traditional web applications, AI-powered applications, and agentic systems. Whether you have legacy code in CI/CD pipelines, vibe-coded projects in Lovable, agent workflows in OpenClaw, or codebases in Claude Code, the same agent loop runs across all of them.